1. Topic 1, Proseware Inc

Background

Proseware, Inc. develops and manages a product named Poll Taker. The product is used for delivering public opinion polling and analysis.

Polling data comes from a variety of sources, including online surveys, house-to-house interviews, and booths at public events.

Polling data

Polling data is stored in one of two locations:

- An on-premises Microsoft SQL Server 2019 database named PollingData

- Azure Data Lake Gen 2. Data in Data Lake is queried by using PolyBase

Poll metadata

Each poll has associated metadata with information about the poll including the date and number of respondents. The data is stored as JSON.
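As an illustration only, a poll metadata document could be parsed as in the sketch below. The field names (`pollId`, `date`, `respondents`) and the sample values are assumptions; the case study states only that the metadata includes the date and number of respondents and is stored as JSON.

```python
import json
from datetime import date

# Hypothetical metadata document. The field names and values are assumptions;
# the source only says the metadata holds the poll date and respondent count.
raw = '{"pollId": "poll-001", "date": "2021-03-15", "respondents": 1250}'


def parse_poll_metadata(text):
    """Parse one poll metadata JSON document into typed Python values."""
    doc = json.loads(text)
    return {
        "poll_id": doc["pollId"],
        "date": date.fromisoformat(doc["date"]),
        "respondents": int(doc["respondents"]),
    }


metadata = parse_poll_metadata(raw)
print(metadata)
```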

Phone-based polling

Security

- Phone-based poll data must only be uploaded by authorized users from authorized devices

- Contractors must not have access to any polling data other than their own

- Access to polling data must be set on a per-Active Directory user basis

Data migration and loading

- All data migration processes must use Azure Data Factory

- All data migrations must run automatically during non-business hours

- Data migrations must be reliable and retry when needed
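One way to picture the scheduling and retry requirements above is a Data Factory schedule trigger paired with an activity retry policy. The sketch below builds both as Python dictionaries that mirror the JSON definitions. The trigger name, pipeline name, start time, retry count, and the 08:00 to 18:00 business-hours window are all assumptions for illustration, not values from the case study.

```python
import json

# Assumed business hours (08:00 to 18:00); the source does not define them.
BUSINESS_HOURS = range(8, 18)

# Sketch of a schedule trigger definition as a Python dict.
# "NightlyMigrationTrigger" and "MigratePollingData" are hypothetical names.
schedule_trigger = {
    "name": "NightlyMigrationTrigger",
    "properties": {
        "type": "ScheduleTrigger",
        "typeProperties": {
            "recurrence": {
                "frequency": "Day",
                "interval": 1,
                "startTime": "2021-01-01T00:00:00Z",
                "timeZone": "UTC",
                # Run once a day at 01:00 in the trigger's time zone.
                "schedule": {"hours": [1], "minutes": [0]},
            }
        },
        "pipelines": [
            {
                "pipelineReference": {
                    "type": "PipelineReference",
                    "referenceName": "MigratePollingData",
                }
            }
        ],
    },
}

# Activity-level retry policy: up to 3 retries, 60 seconds apart (assumed values).
activity_policy = {"timeout": "0.02:00:00", "retry": 3, "retryIntervalInSeconds": 60}


def runs_outside_business_hours(trigger):
    """Return True if every scheduled hour falls outside BUSINESS_HOURS."""
    recurrence = trigger["properties"]["typeProperties"]["recurrence"]
    return all(hour not in BUSINESS_HOURS for hour in recurrence["schedule"]["hours"])


print(json.dumps(schedule_trigger, indent=2))
print(runs_outside_business_hours(schedule_trigger))
```

The check compares the scheduled hours directly against the assumed window, so it is only meaningful when the trigger's time zone matches the office's local time.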

Performance

After six months, raw polling data should be moved to a lower-cost storage solution.
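If the raw data lives in Azure Storage, one possible approach is a lifecycle management rule that moves blobs to a cooler tier after roughly six months. The sketch below builds such a policy as a Python dictionary. The rule name, the `raw/` prefix, the 180-day approximation of six months, and the choice of the cool tier are assumptions; the case study does not specify them.

```python
import json

# Assumption: "six months" is approximated as 180 days.
SIX_MONTHS_IN_DAYS = 180


def build_lifecycle_policy(prefix, days=SIX_MONTHS_IN_DAYS):
    """Build a lifecycle policy that tiers block blobs under `prefix` to cool."""
    return {
        "rules": [
            {
                "enabled": True,
                "name": "move-raw-polling-data",  # hypothetical rule name
                "type": "Lifecycle",
                "definition": {
                    "actions": {
                        "baseBlob": {
                            "tierToCool": {"daysAfterModificationGreaterThan": days}
                        }
                    },
                    "filters": {"blobTypes": ["blockBlob"], "prefixMatch": [prefix]},
                },
            }
        ]
    }


# "raw/" is a hypothetical container path for raw polling data.
policy = build_lifecycle_policy("raw/")
print(json.dumps(policy, indent=2))
```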

Deployments

- All deployments must be performed by using Azure DevOps. Deployments must use templates that can be used in multiple environments

- No credentials or secrets should be used during deployments

Reliability

All services and processes must be resilient to a regional Azure outage.

Monitoring

All Azure services must be monitored by using Azure Monitor. On-premises SQL Server performance must be monitored.

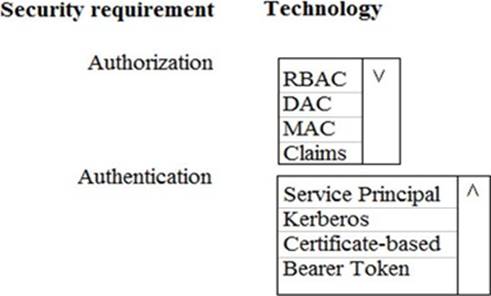

HOTSPOT

You need to ensure that Azure Data Factory pipelines can be deployed.

How should you configure authentication and authorization for deployments? To answer, select the appropriate options in the answer choices.

NOTE: Each correct selection is worth one point.