17. Topic 3, Case study 2

This is a case study. Case studies are not timed separately. You can use as much exam time as you would like to complete each case.

However, there may be additional case studies and sections on this exam. You must manage your time to ensure that you are able to complete all questions included on this exam in the time provided.

To answer the questions included in a case study, you will need to reference information that is provided in the case study. Case studies might contain exhibits and other resources that provide more information about the scenario that is described in the case study. Each question is independent of the other questions in this case study.

At the end of this case study, a review screen will appear. This screen allows you to review your answers and to make changes before you move to the next section of the exam. After you begin a new section, you cannot return to this section.

To start the case study

To display the first question on this case study, click the Next button. Use the buttons in the left pane to explore the content of the case study before you answer the questions. Clicking these buttons displays information such as business requirements, existing environment, and problem statements. If the case study has an All Information tab, note that the information displayed is identical to the information displayed on the subsequent tabs. When you are ready to answer a question, click the Question button to return to the question.

Background

Current environment

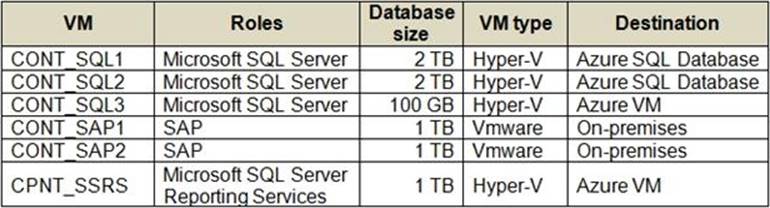

The company has the following virtual machines (VMs):

Requirements

Storage and processing

You must be able to use a file system view of data stored in a blob.

You must build an architecture that will allow Contoso to use the DBFS file system layer over a blob store. The architecture will need to support data files, libraries, and images. Additionally, it must provide a web-based interface to documents that contain runnable commands, visualizations, and narrative text, such as a notebook.

CONT_SQL3 requires an initial scale of 35000 IOPS.

CONT_SQL1 and CONT_SQL2 must use the vCore model and should include replicas. The solution must support 8000 IOPS.

The storage should be configured as storage optimized for database OLTP workloads.
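The IOPS targets above, combined with the requirement to minimize cost, amount to a simple selection rule: pick the cheapest storage option whose IOPS ceiling meets the target. The following is a minimal sketch of that rule only. The `DiskOption` type, the `cheapest_option` helper, and every entry in `CATALOG` are hypothetical placeholders, not real Azure disk tiers or prices; substitute the published limits for the disk types under consideration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class DiskOption:
    """A hypothetical storage option (not a real Azure SKU)."""
    name: str
    max_iops: int
    relative_cost: float


# IOPS targets taken from the case study requirements.
REQUIRED_IOPS = {"CONT_SQL1": 8000, "CONT_SQL2": 8000, "CONT_SQL3": 35000}

# Placeholder catalog: names, limits, and costs are illustrative assumptions.
CATALOG = [
    DiskOption("option_small", 5000, 1.0),
    DiskOption("option_medium", 20000, 3.0),
    DiskOption("option_large", 100000, 8.0),
]


def cheapest_option(required_iops: int,
                    catalog: Sequence[DiskOption]) -> Optional[DiskOption]:
    """Return the lowest-cost option that meets the IOPS target, or None."""
    candidates = [d for d in catalog if d.max_iops >= required_iops]
    if not candidates:
        return None
    return min(candidates, key=lambda d: d.relative_cost)


if __name__ == "__main__":
    for server, iops in REQUIRED_IOPS.items():
        choice = cheapest_option(iops, CATALOG)
        print(server, iops, choice.name if choice else "no option meets target")
```

With real limits filled in, the same comparison shows which options are ruled out by the 35000 IOPS target before cost is considered at all.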

Migration

- You must be able to independently scale compute and storage resources.

- You must migrate all SQL Server workloads to Azure. You must identify related machines in the on-premises environment and get disk size and data usage information.

- Data from SQL Server must include zone-redundant storage.

- You need to ensure that app components can reside on-premises while interacting with components that run in the Azure public cloud.

- SAP data must remain on-premises.

- The Azure Site Recovery (ASR) results should contain per-machine data.

Business requirements

- You must design a regional disaster recovery topology.

- The database backups have regulatory purposes and must be retained for seven years.

- CONT_SQL1 stores customer sales data that requires ETL operations for data analysis. A solution is required that reads data from SQL, performs ETL, and outputs to Power BI. The solution should use managed clusters to minimize costs. To optimize logistics, Contoso needs to analyze customer sales data to see if certain products are tied to specific times in the year.

- The analytics solution for customer sales data must be available during a regional outage.

Security and auditing

- Contoso requires all corporate computers to enable Windows Firewall.

- Azure servers should be able to ping other Contoso Azure servers.

- Employee PII must be encrypted in memory, in motion, and at rest. Any data encrypted by SQL Server must support equality searches, grouping, indexing, and joining on the encrypted data.

- Keys must be secured by using hardware security modules (HSMs).

- CONT_SQL3 must not communicate over the default ports.

Cost

- All solutions must minimize cost and resources.

- The organization does not want any unexpected charges.

- The data engineers must set the SQL Data Warehouse compute resources to consume 300 DWUs.

- CONT_SQL2 is not fully utilized during non-peak hours. You must minimize resource costs during non-peak hours.

You need to design a solution to meet the SQL Server storage requirements for CONT_SQL3.

Which type of disk should you recommend?