17. Topic 3, Litware, Inc.

Overview

General Overview

Litware, Inc. is an international car racing and manufacturing company that has 1,000 employees. Most employees are located in Europe. The company supports racing teams that compete in a worldwide racing series.

Physical Locations

Litware has two main locations: a main office in London, England, and a manufacturing plant in Berlin, Germany.

During each race weekend, 100 engineers set up a remote portable office by using a VPN to connect to the datacenter in the London office. The portable office is set up and torn down in approximately 20 different countries each year.

Existing Environment

Race Central

During race weekends, Litware uses a primary application named Race Central. Each car has several sensors that send real-time telemetry data to the London datacenter. The data is used for real-time tracking of the cars.

Race Central also sends batch updates to an application named Mechanical Workflow by using Microsoft SQL Server Integration Services (SSIS).

The telemetry data is sent to a MongoDB database. A custom application then moves the data to databases in SQL Server 2017. The telemetry data in MongoDB has more than 500 attributes. The application changes the attribute names when the data is moved to SQL Server 2017.
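The renaming step described above can be pictured as a key mapping applied to each telemetry document before it is written as a relational row. The following is a minimal sketch, not the custom application itself: the MongoDB attribute names, the `ATTRIBUTE_MAP` dictionary, and the `rename_attributes` helper are all hypothetical, and only the two SQL column names come from this case study.

```python
from typing import Any, Dict

# Hypothetical mapping from MongoDB attribute names to SQL column names.
# The real application handles more than 500 attributes; two are shown here.
ATTRIBUTE_MAP: Dict[str, str] = {
    "susp_spring": "SuspensionSprings",
    "shock_oil_wt": "ShockOilWeight",
}


def rename_attributes(doc: Dict[str, Any], mapping: Dict[str, str]) -> Dict[str, Any]:
    """Return a copy of doc with keys renamed per mapping; unmapped keys are kept as-is."""
    return {mapping.get(key, key): value for key, value in doc.items()}


# Example document with made-up values.
telemetry_doc = {"susp_spring": "SPR-00412345", "shock_oil_wt": 7.5, "lap": 12}
row = rename_attributes(telemetry_doc, ATTRIBUTE_MAP)
print(row)
```

Keeping the mapping in one table-like structure makes it easier to see which source attribute feeds which column when the pipeline is later rebuilt on Azure.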

The database structure contains both OLAP and OLTP databases.

Mechanical Workflow

Mechanical Workflow is used to track changes and improvements made to the cars during their lifetime.

Currently, Mechanical Workflow runs on SQL Server 2017 as an OLAP system.

Mechanical Workflow has a table named Table1 that is 1 TB. Large aggregations are performed on a single column of Table1.

Requirements

Planned Changes

Litware is in the process of rearchitecting its data estate to be hosted in Azure. The company plans to decommission the London datacenter and move all its applications to an Azure datacenter.

Technical Requirements

Litware identifies the following technical requirements:

- Data collection for Race Central must be moved to Azure Cosmos DB and Azure SQL Database. The data must be written to the Azure datacenter closest to each race and must converge in the least amount of time.

- The query performance of Race Central must be stable, and the administrative time it takes to perform optimizations must be minimized.

- The database for Mechanical Workflow must be moved to Azure SQL Data Warehouse.

- Transparent data encryption (TDE) must be enabled on all data stores, whenever possible.

- An Azure Data Factory pipeline must be used to move data from Cosmos DB to SQL Database for Race Central. If the data load takes longer than 20 minutes, configuration changes must be made to Data Factory.

- The telemetry data must migrate toward a solution that is native to Azure.

- The telemetry data must be monitored for performance issues. You must adjust the Cosmos DB Request Units per second (RU/s) to maintain a performance SLA while minimizing the cost of the RU/s.
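The last requirement, balancing the performance SLA against RU/s cost, can be illustrated with a small sizing rule. This is only a sketch under stated assumptions: the `recommend_rus` function, the 20 percent headroom, the 1 percent throttling threshold, the 100 RU/s rounding step, and the 400 RU/s floor are illustrative choices rather than values given in the case study, and the metrics would in practice come from monitoring.

```python
import math


def recommend_rus(current_rus: int, peak_consumed_rus: float, throttled_ratio: float,
                  headroom_pct: int = 20, max_throttle: float = 0.01,
                  step: int = 100, floor: int = 400) -> int:
    """Suggest a provisioned RU/s value from observed metrics (illustrative policy only)."""
    if throttled_ratio > max_throttle:
        # Requests are being rate limited: size above whichever is larger,
        # the current setting or the observed peak.
        base = max(current_rus, peak_consumed_rus)
    else:
        # No meaningful throttling: size to the observed peak, which may
        # lower the setting and reduce cost.
        base = peak_consumed_rus
    target = base * (100 + headroom_pct) / 100
    # Round up to a multiple of `step` and never go below `floor`.
    return max(floor, math.ceil(target / step) * step)


print(recommend_rus(current_rus=1000, peak_consumed_rus=950, throttled_ratio=0.05))
print(recommend_rus(current_rus=2000, peak_consumed_rus=900, throttled_ratio=0.0))
```

The first call models a throttled container that should be scaled up; the second models an over-provisioned container that could be scaled down.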

Data Masking Requirements

During race weekends, visitors will be able to enter the remote portable offices. Litware is concerned that some proprietary information might be exposed.

The company identifies the following data masking requirements for the Race Central data that will be stored in SQL Database:

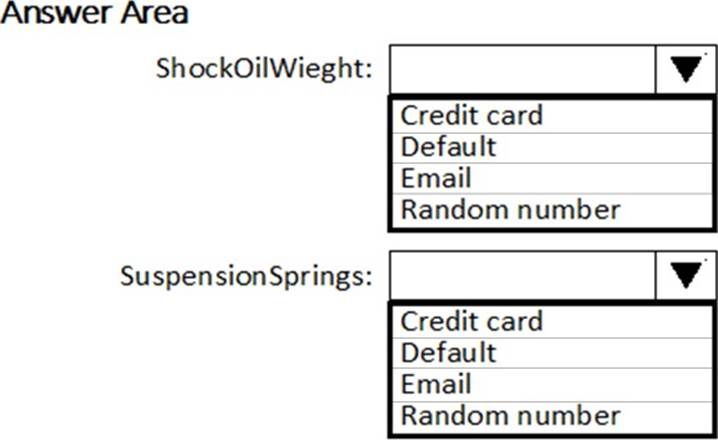

- Only show the last four digits of the values in a column named SuspensionSprings.

- Only show a zero value for the values in a column named ShockOilWeight.

HOTSPOT

Which masking functions should you implement for each column to meet the data masking requirements? To answer, select the appropriate options in the answer area. NOTE: Each correct selection is worth one point.
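As background for this question, the sketch below emulates in Python how two SQL Server dynamic data masking functions shape their output: `partial(prefix, padding, suffix)` exposes leading and trailing characters around a padding string, and `default()` shows a zero value for numeric types. The helper functions, the sample values, and the table name `dbo.RaceCentralTelemetry` are hypothetical, and the emulation is simplified (it does not reproduce how the engine treats values shorter than the mask).

```python
def partial_mask(value: str, prefix: int, padding: str, suffix: int) -> str:
    """Emulate the output shape of partial(prefix, padding, suffix) for a string value."""
    head = value[:prefix]
    tail = value[-suffix:] if suffix > 0 else ""
    return head + padding + tail


def default_mask_numeric(value: float) -> int:
    """Emulate default() on a numeric column, which exposes a zero value."""
    return 0


# T-SQL that would apply such masks; the table name is an assumption.
MASK_STATEMENTS = [
    "ALTER TABLE dbo.RaceCentralTelemetry ALTER COLUMN SuspensionSprings "
    "ADD MASKED WITH (FUNCTION = 'partial(0,\"XXXX\",4)');",
    "ALTER TABLE dbo.RaceCentralTelemetry ALTER COLUMN ShockOilWeight "
    "ADD MASKED WITH (FUNCTION = 'default()');",
]

masked_springs = partial_mask("SPR-00412345", 0, "XXXX", 4)
masked_oil = default_mask_numeric(7.5)
print(masked_springs, masked_oil)
```

With a prefix of 0 and a suffix of 4, only the last four characters remain visible, which is the behavior the SuspensionSprings requirement describes.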